The Cloud Native Runtime Software Stack

By Richard Lander, CTO | May 17, 2024

This blog post reviews the software stack we commonly use to run cloud native software today. We'll also extrapolate what we've seen so far to speculate on how it will evolve in the near future.

What is a Software Stack?

A software stack is a set of distinct pieces of software that work together to produce some useful functionality.

LAMP Stack

Early in the evolution of web development, the LAMP stack was very commonly used. It consists of:

- The Linux operating system.

- The Apache web server. Apache accepts HTTP connections from users of a web application and offloads the processing of the request to the application.

- The MySQL database. MySQL is a relational database that forms the persistence layer for many applications and is a popular choice for web apps.

- An application written in the PHP scripting language.

This simple stack fueled much of the early explosion of what is sometimes referred to as Web 2.0 or dynamic web content.

There are a bunch of variations on this stack that use Nginx instead of Apache, PostgreSQL or MariaDB instead of MySQL, and Python or Perl instead of PHP. The same principle applies in each case for the software stack: a set of software that is used together to make an application available.

What is a Runtime Environment?

"Runtime environment" often refers to a specific software layer that allows a particular build of an application to run and makes it portable across different operating systems. The Java Runtime Environment (JRE) is an example of this.

I will take the liberty of using a broader definition that is just this: A runtime environment is all the things needed to run an application and make it available to end users.

This includes all dependencies from environment variables that provide runtime parameters, to services and APIs found on the network, to infrastructure providing compute and storage resources for the application.

Primitive Runtime Environment

For simple use cases, the runtime environment may be little more than a Linux server with some packages and libraries installed.

Containerized Runtime Environment

At a bare minimum, containerized applications also need a container runtime such as Docker or containerd installed on the host where the container will run. If you're deploying many containers, you'll likely want to use container orchestration as well.

For modern cloud native applications that host many containers and leverage container orchestration with Kubernetes, the runtime environment can be quite involved.

Summary

The runtime environment required to run an app boils down to the dependencies the app needs. If the app is designed to leverage managed services from a cloud provider, the availability of those managed services will be a part of the runtime environment. If an app relies on an AWS RDS instance, it won't run without its database, so that DB constitutes a part of the app's runtime environment.
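To make the broad definition concrete, here is a minimal sketch that treats a runtime environment as a list of dependencies and reports which ones are missing. The `Dependency` type, the `missing_dependencies` function and every name in the example data are hypothetical and exist only for illustration. They do not come from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    """One thing the app needs at runtime (all names here are illustrative)."""
    kind: str  # e.g. "env", "service" or "managed-service"
    name: str  # e.g. "DATABASE_URL" or "orders-db (AWS RDS)"


def missing_dependencies(required, available):
    """Return the required dependencies that the environment does not provide.

    `available` is a set of Dependency objects describing what is present.
    """
    return [dep for dep in required if dep not in available]


# A hypothetical app that needs an env var, an API on the network and an RDS database.
required = [
    Dependency("env", "DATABASE_URL"),
    Dependency("service", "payments-api"),
    Dependency("managed-service", "orders-db (AWS RDS)"),
]

# The environment as observed: the database has not been provisioned yet.
available = {
    Dependency("env", "DATABASE_URL"),
    Dependency("service", "payments-api"),
}

missing = missing_dependencies(required, available)
runtime_ready = not missing  # False until the database exists
```

The point of the sketch is only that the database counts the same way an environment variable does: if it is missing, the runtime environment is incomplete.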

Modern Cloud Native Software Stack

Modern cloud native software commonly uses a service-oriented architecture, i.e. multiple distinct workloads that connect with each other over a local network and combine to form an application. They run in containers and often leverage managed services offered by a cloud provider. Let's break this software stack into its component parts.

Linux

Linux has become a core component of the modern web's runtime stack. Most of the internet runs on Linux servers.

Hypervisor

Virtualization of server hardware has long since become the norm. It provides for superior utilization of compute resources and enables the cloud computing that allows us to rent servers from Amazon, Google, Microsoft and others.

Kubernetes

Kubernetes is the industry standard container orchestration system. If you want to run your app in containers, schedule replicas of it across different machines and availability zones for redundancy, and get autoscaling and service discovery among workloads in a cluster of machines, Kubernetes is the go-to solution.
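As a rough illustration of what "scheduled across different machines and availability zones" means, the toy function below rotates replicas through zones and then through the machines in each zone. This is a simplified sketch and not how the Kubernetes scheduler is implemented. The function name, machine names and zone names are all made up for the example.

```python
from collections import defaultdict
from itertools import cycle


def spread_replicas(replicas, machines):
    """Assign replica indexes to machines, rotating through zones first.

    `machines` maps machine name -> availability zone.
    Returns a dict of replica index -> machine name.
    """
    if not machines:
        raise ValueError("at least one machine is required")

    # Group machines by zone, in a stable (sorted) order.
    by_zone = defaultdict(list)
    for machine, zone in sorted(machines.items()):
        by_zone[zone].append(machine)

    # One round-robin iterator of machines per zone, plus one over the zones.
    zone_machines = {zone: cycle(names) for zone, names in by_zone.items()}
    zones = cycle(sorted(by_zone))

    placement = {}
    for replica in range(replicas):
        zone = next(zones)
        placement[replica] = next(zone_machines[zone])
    return placement


# Hypothetical cluster: four machines in three zones.
machines = {
    "node-a1": "zone-a",
    "node-a2": "zone-a",
    "node-b1": "zone-b",
    "node-c1": "zone-c",
}
placement = spread_replicas(4, machines)
```

With this input, the first three replicas land in three different zones, and the fourth goes back to `zone-a` on a machine that has no replica yet. Doing this by hand for a handful of containers is feasible. Doing it for hundreds is the job an orchestrator takes over.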

So far, our runtime software stack looks something like this:

Note that the Containerized Workloads actually run on the Linux Machines. They don't "run on" a Kubernetes cluster - the Kubernetes control plane just orchestrates the containers that run on the various Linux Machines. I've represented it this way because it's a useful logical model and how we generally think about our containerized workloads when orchestrated by Kubernetes. We no longer need to think about which container runs on what machine.

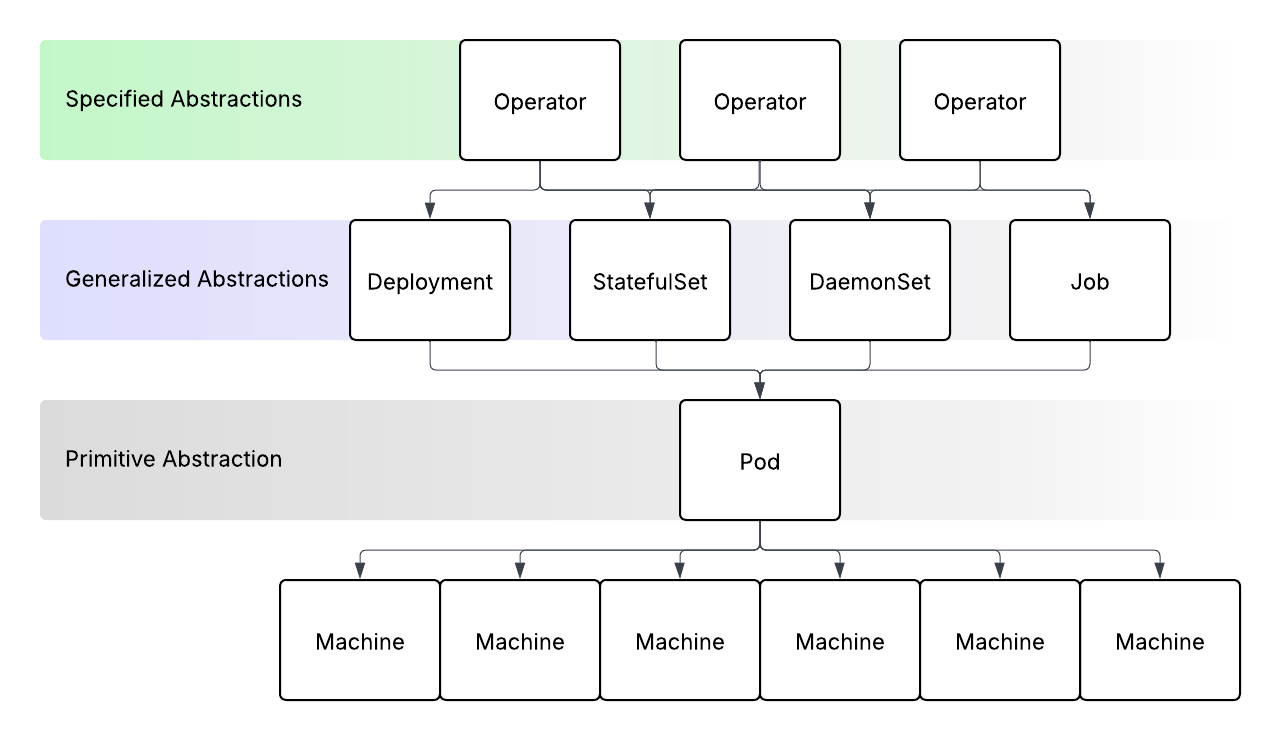

There are two patterns that begin to emerge here:

- Subdivision for efficiency and agility.

  - Physical machines are divided into virtual machines by a hypervisor to improve utilization of compute resources for a given use case. This also improves agility in allowing virtual machines to be provisioned from a pool of physical hardware for temporary spikes in traffic or batch jobs.

  - Virtual machine user space is subdivided into containers so that each can run in isolation with its immediate dependencies (such as OS packages and language libraries) baked into the container.

- Consolidation for manageability.

  - Cloud provider APIs consolidate all the compute infrastructure management behind a single API. The cloud provider infra required for a cloud native environment is much more than the machines where the containers run (more on this shortly).

  - The Kubernetes API consolidates the orchestration of containers running across many machines. If you have hundreds or thousands of containers that need to be run across dozens or hundreds of machines, manually scheduling them becomes entirely untenable. The Kubernetes control plane orchestrates this concern along with others related to container networking, autoscaling, service discovery, storage provisioning and much more.

Managed Services

Modern cloud native apps are much more than their containerized workloads. They often use managed services offered by cloud providers or other specialist providers that are a part of the application stack itself. These include databases, message queues and object storage buckets.

Support Services

Cloud native applications also need an array of support services installed on Kubernetes to manage network ingress routing, TLS certificates, DNS records, secrets, metrics and log aggregation. And these are just the obvious, commonly required support services. Often, many more are needed for things like access control, security and advanced networking.

Multi-Cluster

Practically every organization that runs Kubernetes uses more than one cluster. Different clusters for different tiers, e.g. development, staging and production, are a given. Because Kubernetes is a data center abstraction, if your applications need to have a presence in different regions, you will be running different clusters in different global regions. Often, different business units in an organization use different clusters for cost control purposes. There are many more reasons why an org will use different clusters, but the bottom line is that an org will almost inevitably use multiple clusters.
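A quick sketch shows how fast the cluster count grows once tiers and regions multiply. The organization name, the naming scheme and the tier-to-region mapping are assumptions for illustration. The region identifiers are borrowed from AWS only to make the example readable.

```python
def plan_clusters(regions_by_tier, org="acme"):
    """List one cluster name per (tier, region) pair.

    `regions_by_tier` maps a tier name to the regions where it must run.
    """
    return [
        f"{org}-{tier}-{region}"
        for tier, regions in regions_by_tier.items()
        for region in regions
    ]


# Hypothetical layout: lower tiers in one region, production in two.
regions_by_tier = {
    "dev": ["us-east-1"],
    "staging": ["us-east-1"],
    "prod": ["us-east-1", "eu-west-1"],
}
clusters = plan_clusters(regions_by_tier)
```

Even this small layout yields four clusters. Adding a separate set per business unit would multiply that number again.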

Multi-Cloud

Furthermore, there is a strong desire from many organizations to diversify across multiple cloud providers. This is not a trivial endeavor and remains an aspiration for many. However, it is important when considering the entire stack from the infrastructure up.

Now, our runtime software stack looks as follows:

What's Next?

Support Service Consolidation

Today we have a very diverse ecosystem for support services on Kubernetes. A large part of what makes Kubernetes adoption daunting is the selection of the right projects to use for each part of the stack. Over time, the contenders in this space will whittle down to a few obvious choices in each category. This has already happened in some categories. For example, Prometheus is the obvious choice for metrics collection, and cert-manager is a similarly obvious choice for TLS certificate management. But the choice is far less obvious in most other areas.

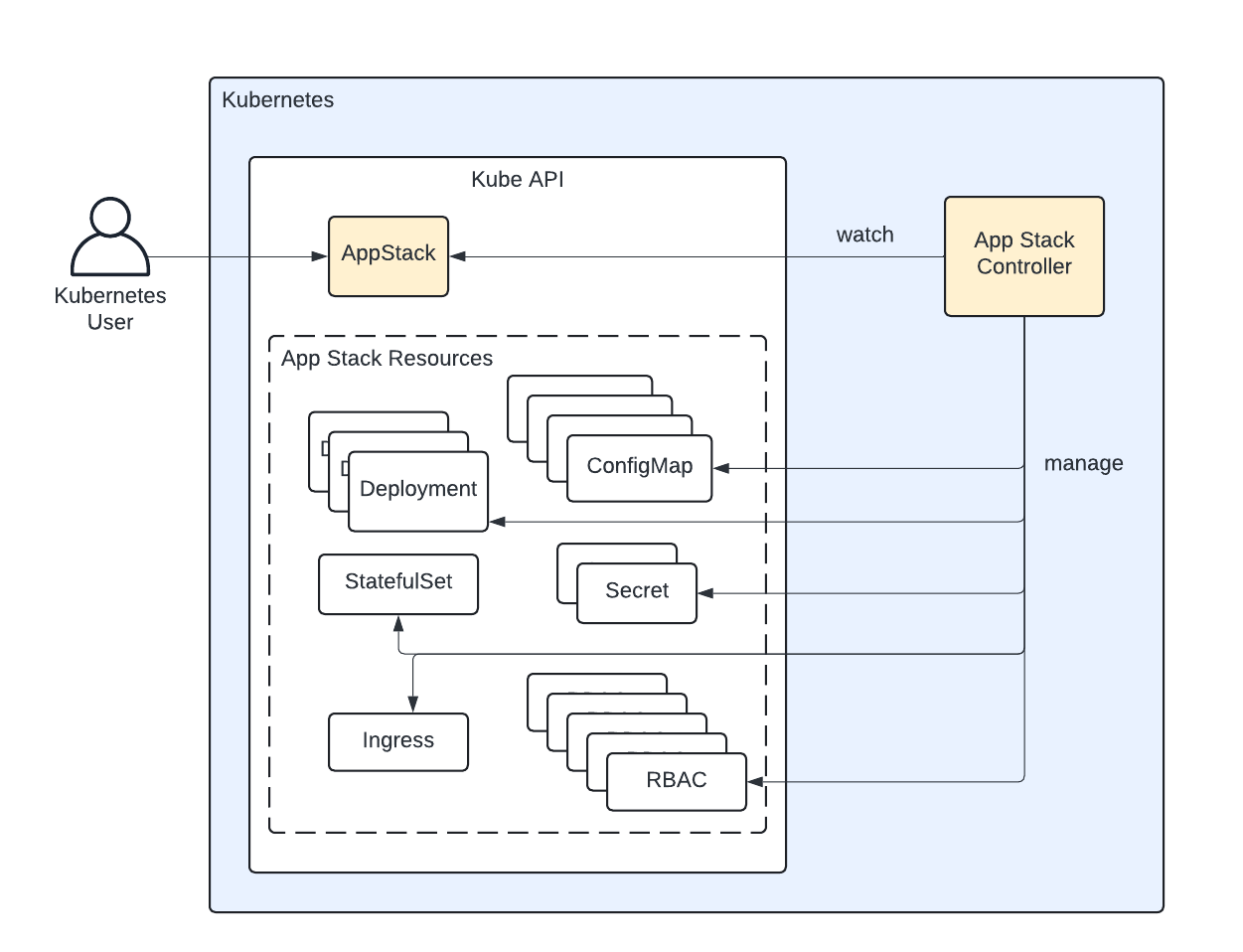

Something that could help in this consolidation is a new layer of the stack: A layer that abstracts the need to choose a project for a user of the cloud native runtime stack. This constitutes a new consolidation layer that improves manageability of this entire stack.
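One way to picture such an abstraction layer is a catalog that maps a category of need to a concrete project, so that the user asks for "metrics" and not for a specific tool. The sketch below is hypothetical. Only the Prometheus and cert-manager entries come from the text above. The remaining categories are deliberately left undecided, and `my-ingress-controller` is a placeholder, not a real project recommendation.

```python
# Category -> project. None marks categories where the choice is less obvious.
DEFAULT_PROJECTS = {
    "metrics": "Prometheus",
    "tls-certificates": "cert-manager",
    "ingress": None,
    "dns-records": None,
    "secrets": None,
    "log-aggregation": None,
}


def resolve_support_services(needs, overrides=None):
    """Map requested categories to projects.

    Returns (chosen, undecided): a dict of category -> project, and a list
    of categories for which no project has been selected yet.
    """
    catalog = {**DEFAULT_PROJECTS, **(overrides or {})}
    chosen, undecided = {}, []
    for category in needs:
        project = catalog.get(category)
        if project is None:
            undecided.append(category)
        else:
            chosen[category] = project
    return chosen, undecided


needs = ["metrics", "tls-certificates", "ingress"]
chosen, undecided = resolve_support_services(needs)

# A platform team can fill a gap without the app owner changing the request.
chosen_with_override, still_undecided = resolve_support_services(
    needs, overrides={"ingress": "my-ingress-controller"}
)
```

The person deploying an app only ever states the category. Which project satisfies it is a decision made once, in the layer below.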

A New Component of the Stack

We believe that a new layer in the stack is needed to support future progress. That layer is Application Orchestration, and it is the next logical step in the evolution of the cloud native runtime stack.

This application orchestration layer interfaces with the cloud provider APIs to provision compute infrastructure and Kubernetes clusters. It also interfaces with the Kubernetes APIs to deploy containerized workloads, including the support services that the application orchestrator deploys to support the workloads you create. With this layer, a user has a single API to manage everything that is needed for the complete cloud native runtime software stack. They can deploy their workloads and have the application orchestrator manage the apps' dependencies in response to what those apps need, stitching everything together at runtime.
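The core idea of managing dependencies in response to what an app needs can be sketched as a dependency graph that is resolved into a provisioning order. This is an illustrative model only and makes no claim about how any real application orchestrator works internally. The component names and the `deployment_order` helper are invented for the example. The ordering relies on `graphlib` from the Python standard library (3.9+).

```python
from graphlib import TopologicalSorter


def deployment_order(depends_on):
    """Return components in an order where every dependency comes first.

    `depends_on` maps each component to the set of components it requires.
    """
    return list(TopologicalSorter(depends_on).static_order())


# Hypothetical app: the web app needs support services and a managed database,
# which in turn need a cluster and cloud infrastructure.
depends_on = {
    "kubernetes-cluster": {"cloud-infrastructure"},
    "support-services": {"kubernetes-cluster"},
    "managed-database": {"cloud-infrastructure"},
    "web-app": {"support-services", "managed-database"},
}
order = deployment_order(depends_on)
```

In this model the user declares only the `web-app` and what it depends on. The resulting order always starts with `cloud-infrastructure` and ends with `web-app`, with the cluster created before the support services are installed on it.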

It simplifies Kubernetes, making it as straightforward to get started as it is for a platform-as-a-service (PaaS) offering, but without the inherent constraints of a PaaS.

Threeport

Threeport is just such an application orchestrator. It provides the primitives to orchestrate cloud native runtime environments and deliver cloud native workloads. It is extensible to accommodate the most sophisticated custom solutions that any company may need. In a world where software is getting more complex and involved to meet end user demand, this is a must. Today, Threeport supports only one cloud provider: AWS. But this will not be the case for very long. Threeport is open source and free to try out.

Qleet

Qleet is a managed Threeport provider. If you try out Threeport and like what you see, check us out. We'll help by providing your Threeport control plane so you don't have that management overhead. You can use our managed Threeport control planes to run your Kubernetes clusters and workloads on your cloud account.